Kristi Noem Bikini: Why This Search Went Viral, How to Spot Fake Images, and What You Should Know About Deepfakes

The search term “kristi noem bikini” sits at the intersection of politics, viral media, and modern attention economics. Kristi Noem is a high-profile U.S. public official, currently serving as Secretary of the Department of Homeland Security, which naturally increases the volume of search interest around her name and imagery.

At the same time, “bikini” as a modifier tends to pull search results toward sensational thumbnails, questionable “exclusive” claims, and repost farms: exactly the sort of ecosystem where context gets stripped and synthetic imagery can spread. This guide unpacks what the trend usually signals, how to assess what you’re seeing, and how to engage with the topic without amplifying misinformation or digital harassment.

| What you see in search results | Typical source | Reliability signals | Common red flags | What to do next |
|---|---|---|---|---|
| Official portraits, press photos | Government sites, reputable outlets, wire services | Clear captioning, date/location, consistent cross-publication | Missing credits, cropped watermarks, no publication info | Prefer original source pages and credited outlets |
| “Viral” images with clickbait titles | Social platforms, content mills | None or weak attribution; often recycled text | “Hold your breath,” “proof,” “leaked,” comments-only links | Don’t share; verify provenance first |
| AI-looking “glamour” thumbnails | Random blogs, video channels, aggregator pages | Rarely any; inconsistent metadata | Unnatural skin texture, odd fingers/jewelry, warped text | Reverse image search + look for first appearance |
| “Removal” or “report” guidance | Platform/help centers | Clear policy language, reporting flows | Upsells, paywalls, shady “takedown” services | Use official reporting tools and documentation |
| “Explainers” on authenticity | Journalism orgs, standards bodies | Methodology, tools, examples | Overconfident certainty, no verification steps | Follow a repeatable verification checklist |

Why certain searches spike around public figures

Search spikes don’t always mean new facts emerged; more often they mean a phrase was repeated at scale—on a platform, in a meme cycle, or via a cluster of accounts pushing the same thumbnail. Public officials draw persistent interest because their image is part of the public record, but the internet routinely reshapes that interest into “visual gossip,” where curiosity becomes clicks.

That matters because “image curiosity” is one of the easiest levers to pull for traffic. When a person’s name is combined with a suggestive keyword, the query can become a magnet for low-quality pages that mirror each other, embed the same recycled video, or point readers into comment sections and messaging apps where verification is practically impossible.

Search intent: what people are really trying to learn

Many users typing this phrase are not explicitly seeking prurient content; they’re trying to understand whether a viral post is real, what the context is, or why the internet is talking about it at all. That’s an important distinction: intent is often informational (“Is this legit?”) even when the wording is sensational.

For publishers and readers alike, treating the query as an authenticity-and-context question produces better outcomes. You can answer what people actually need (source, date, provenance, credibility) without turning the page into a rumor amplifier or an objectifying commentary track.

The high-risk zone: clickbait, manipulated images, and synthetic media

The modern “viral image” pipeline rewards speed over accuracy. A screenshot moves from one platform to another, captions change, and the original uploader disappears behind repost accounts. In that environment, manipulated edits and AI-generated images can circulate as “everyone’s talking about this,” even when no credible outlet has verified the media.

It’s also the environment where non-consensual synthetic content can flourish. Search engines and platforms have begun tightening policies around explicit fake imagery and related queries, specifically because harmful deepfakes and deceptive thumbnails became so common.

How to verify a viral image without becoming part of the spread

A reliable verification mindset starts with friction: slow down before you share, bookmark, or repost. The first step is to separate the claim (“this is real”) from the artifact (the image itself). Then ask: where did this file first appear, who posted it, and can that origin be independently corroborated?

Practical guidance from media literacy and reporting communities is consistent: use reverse image search, check whether credible outlets have published the same image with context, and look for signs of manipulation or AI artifacts.

Reverse image search: what it can and cannot prove

Reverse image search is strong at finding earlier copies of the same image, but it doesn’t automatically tell you whether the earliest copy is authentic. What it does provide is a timeline: you can often locate the first high-resolution upload, see whether it came from an official source, and determine whether the image is being repeatedly reposted without context.

Use the timeline intelligently. If the earliest appearances are on low-credibility pages with identical wording, that’s a pattern consistent with coordinated clickbait distribution. If the image shows up in a reputable outlet with a caption, photographer credit, and event context, that’s a materially stronger signal.
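The timeline reasoning above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Hit` record, its fields, and the example URLs are hypothetical, and the results are assumed to be typed in by hand from whatever reverse image search tool you used. No search engine API is being called here.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Hit:
    """One reverse-image-search result, entered by hand (hypothetical schema)."""
    url: str
    first_seen: date
    caption: str
    has_credit: bool  # photographer or agency credit visible on the page


def build_timeline(hits):
    """Return hits ordered from earliest to latest appearance."""
    return sorted(hits, key=lambda h: h.first_seen)


def repeated_captions(hits, min_copies=2):
    """Return captions that appear verbatim on at least `min_copies` pages."""
    counts = Counter(h.caption.strip().lower() for h in hits)
    return {text for text, n in counts.items() if n >= min_copies}


# Made-up example data, not real search results.
hits = [
    Hit("https://example.com/repost-a", date(2024, 5, 3), "You won't believe this", False),
    Hit("https://example.org/news/event", date(2024, 5, 1), "Official at a press event", True),
    Hit("https://example.net/repost-b", date(2024, 5, 4), "You won't believe this", False),
]

timeline = build_timeline(hits)
earliest = timeline[0]
copied = repeated_captions(hits)
print(earliest.url, earliest.has_credit)
print(copied)
```

An earliest hit that carries a credit is the stronger signal described above, while identical captions repeated across uncredited pages match the clickbait pattern. Neither result proves authenticity on its own.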

Provenance and Content Credentials: the “nutrition label” for media

The internet is moving toward provenance standards that let creators and platforms attach verifiable metadata to media assets. One widely discussed approach is Content Credentials, supported by the Coalition for Content Provenance and Authenticity (C2PA), which provides a standardized way to attach tamper-evident information about a file’s origin and edits.

A useful way to explain it is the coalition’s own framing: “Content Credentials function like a nutrition label for digital content.” When credentials are present and validated, they can help you distinguish original publication from screenshots and re-encodes—though absence of credentials doesn’t automatically mean something is fake.

What major platforms and Google are doing about explicit fakes

Search and social platforms have had to adapt because synthetic and manipulated imagery scales cheaply, while human verification scales slowly. Google has published specific updates and policies aimed at reducing visibility of explicit non-consensual fake content, including stronger demotion and removal mechanisms once a valid removal request is approved.

Google also provides a removal pathway for personal sexual content and artificial imagery in Search, including in-product reporting flows in Image Search. This is important context because some “name + bikini” queries can be adjacent to harmful non-consensual content ecosystems, even when the user didn’t intend that outcome.

Ethics, consent, and why “just looking” still has impact

Even when an image is not explicit, the framing around it can be. The difference between “public image” and “sexualized rumor content” is often the difference between contextual reporting and engagement farming. When a page or post exists mainly to invite objectifying attention, it can function as a form of harassment—especially if the imagery is synthetic or misleading.

Ethical consumption online is not about policing curiosity; it’s about refusing to reward deception. The more users click “proof” thumbnails, the more the market produces them. The alternative is to privilege sources that add context, cite origin, and avoid turning a person’s body into the story.

Political branding and the reality of visual-first attention

For public officials, imagery is not a side issue; it’s part of political communication. Official portraits, event photography, and controlled media appearances are designed to signal competence, authority, and relatability. Kristi Noem’s current federal role also increases the baseline media intensity around her image, because DHS leadership is inherently newsworthy.

The problem is that visual-first attention is vulnerable to distortion. A cropped frame can imply a different moment; an AI-generated thumbnail can suggest a scene that never happened; a recycled “viral” caption can repackage an unrelated image into a trending narrative.

The economics behind “comment bait” and thumbnail traps

A lot of the pages ranking for sensational queries aren’t trying to inform you; they’re trying to route you into engagement loops. Common patterns include “check the comments,” “link in bio,” and “watch to the end,” which shift the interaction into places where accountability is low and content can be swapped after the fact.

This is why verification has to be process-based, not vibe-based. The more emotionally charged the framing, the more you should demand primary sourcing. If a post insists an image is “real” but provides no publication history, no photographer credit, and no corroboration, treat the certainty as a persuasion tactic—not evidence.

How journalists and fact-checkers handle visual claims

Professional verification is less dramatic than people expect. It’s about triangulation: confirming the same asset across independent credible sources, checking event schedules and location details, reviewing metadata when available, and contacting the originating organization when necessary. When that chain can’t be established, responsible outlets either withhold publication or explicitly label uncertainty.

If you want to borrow this mindset, start by asking a simple question: could a reputable outlet responsibly publish this image today with a caption that includes date, place, and source? If the answer is “no,” you have your risk signal.
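One way to make that question repeatable is to write it down as a checklist. The sketch below is a hypothetical illustration, not a tool that journalists or fact-checkers are known to use: the `ImageClaim` fields and the rule that every signal must be present are assumptions chosen to mirror the date, place, and source test described above.

```python
from dataclasses import dataclass


@dataclass
class ImageClaim:
    """Facts you were able to establish about an image (hypothetical fields)."""
    has_date: bool
    has_place: bool
    has_named_source: bool
    has_photo_credit: bool
    independent_outlets: int  # credible outlets that published it with context


def missing_signals(claim):
    """List the sourcing signals that could not be established."""
    checks = {
        "date": claim.has_date,
        "place": claim.has_place,
        "named source": claim.has_named_source,
        "photo credit": claim.has_photo_credit,
        "independent corroboration": claim.independent_outlets >= 1,
    }
    return [name for name, ok in checks.items() if not ok]


def publishable(claim):
    """True only when every signal in the checklist is present."""
    return not missing_signals(claim)


# Made-up example: a viral thumbnail with no sourcing at all.
viral = ImageClaim(False, False, False, False, 0)
print(publishable(viral), missing_signals(viral))
```

The all-or-nothing rule is deliberately strict. A looser version could weight the signals, but a list of what is missing is usually more useful to a reader than a score.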

If you’re targeted by synthetic or sexualized imagery

If you ever find your own name pulled into similar “name + bikini” search behavior, you should know that removal and reporting options exist, and they are improving. Google’s documentation describes processes to request removal of personal sexual content, including artificial imagery, from Search results.

The practical playbook is to document URLs, take screenshots for records, and use official reporting channels rather than paying random “reputation cleanup” services that may simply resell the same request forms. If threats or coercion are involved, preserve evidence and consider legal counsel or local law enforcement guidance depending on jurisdiction.
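The documentation step can be kept consistent with a small local log. The following is a minimal sketch using only the Python standard library. The function names, CSV column names, and file layout are assumptions made for illustration, and a log like this is a personal record rather than something any platform or court is known to require in this form.

```python
import csv
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path):
    """Hash a file so later copies can be compared against the original."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_evidence(log_path, url, screenshot_path):
    """Append one row (UTC log time, URL, screenshot file, SHA-256) to a CSV log."""
    log_path = Path(log_path)
    is_new = not log_path.exists()
    row = [
        datetime.now(timezone.utc).isoformat(timespec="seconds"),
        url,
        str(screenshot_path),
        sha256_of(screenshot_path),
    ]
    with open(log_path, "a", newline="", encoding="utf-8") as fh:
        writer = csv.writer(fh)
        if is_new:
            # Write the header once, when the log file is first created.
            writer.writerow(["logged_at_utc", "url", "screenshot", "sha256"])
        writer.writerow(row)
    return row
```

Calling `log_evidence("evidence.csv", url, "screenshot.png")` once per URL builds a dated list you can attach to a report. The hash lets you show that a screenshot file has not changed since it was logged.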

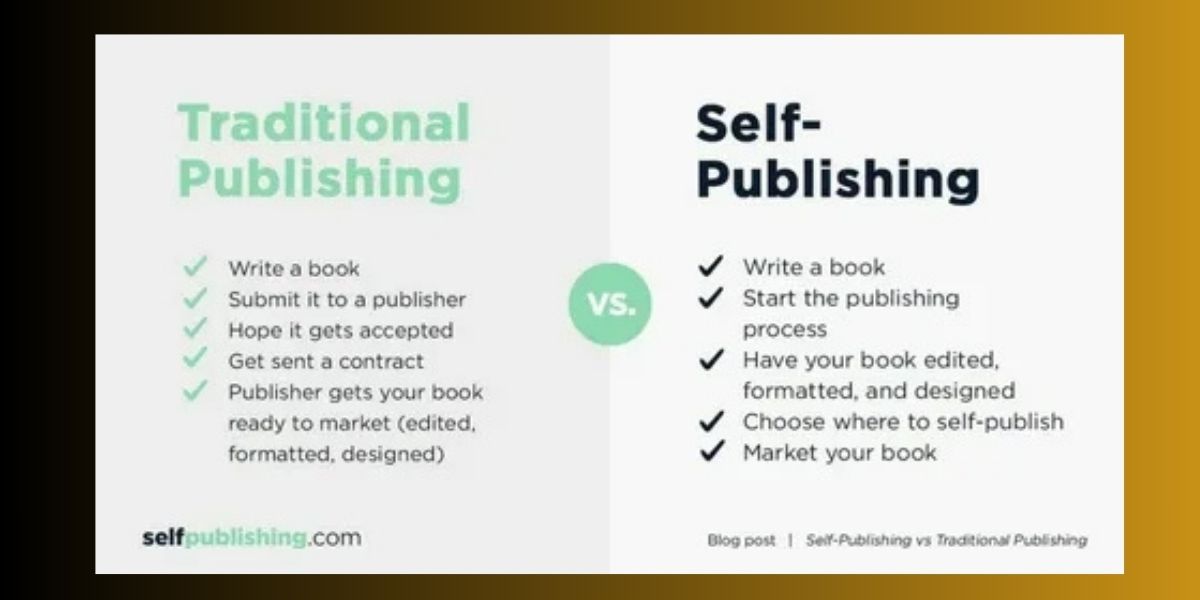

Publishing responsibly around sensitive trend queries

If you’re building a page designed to rank for sensitive queries, the safest long-term SEO strategy is trust: clarify uncertainty, avoid embedding questionable media, and focus on verification steps and authoritative policy references. This aligns with how search engines increasingly reward expertise and devalue pages that exist primarily to host exploitative or misleading content.

Readers stay longer when they feel protected from manipulation. Ironically, the highest-engagement pages in this space are often the ones that tell users what not to click, how to validate what they saw, and where to report harmful material, because that’s what users actually need when a trend feels “off.”

Conclusion

The phrase “kristi noem bikini” is best understood as a signal about the modern web, not a reliable indicator that a specific verified photo exists. When a public figure’s name is fused with a sensational keyword, the result is usually a mix of rumor-driven thumbnails, recycled posts, and occasional synthetic media, most of it optimized for clicks rather than truth.

If you treat the query as an authenticity problem, you can navigate it cleanly: verify the origin, prefer credited sources, understand provenance standards, and use official reporting tools when content crosses ethical or policy lines. That approach protects readers, reduces misinformation, and ultimately creates a higher-quality internet feedback loop.

FAQs

People search trend-phrases for many reasons: curiosity, confusion, and genuine fact-checking. The best FAQ answers don’t feed the rumor mill; they give readers a repeatable method to evaluate what they’ve seen and decide what to do next.

The most helpful posture is calm skepticism. Assume that anything designed to trigger a fast emotional reaction may be engineered for engagement, and treat verification as the main point of the journey—not the thumbnail.

Is the “kristi noem bikini” search about a verified photo?

Not necessarily. In practice, the query often reflects a viral-post cycle where suggestive captions and thumbnails drive clicks, while credible sourcing is thin or missing.

How can I check whether an image connected to this trend is real?

Start with reverse image search to find earliest appearances, then look for publication by credible outlets with captions and credits. That process is more reliable than judging by “how real it looks.”

Why do search engines show clickbait results for “kristi noem bikini”?

Because many sites target the same high-curiosity keywords, and some platforms reward engagement signals that clickbait is good at generating. The phrase can be an SEO magnet for low-quality pages competing on sensational framing.

What are Content Credentials and do they prove authenticity?

Content Credentials (C2PA) are a provenance standard that can attach tamper-evident information about origin and edits. They help establish a trustworthy chain of custody when present, but absence doesn’t automatically mean something is fake.

What if someone is being targeted by fake or sexualized images in Search?

Use official reporting and removal tools. Google documents a process for removing personal sexual content and artificial imagery from Search results, which is often the most direct starting point.